Next Generation Legal FIR Analysis: AI-based Summarization and Section Identification

DOI:

https://doi.org/10.31033/IJEMR/16.2.2026.1854

Keywords:

FIR Analysis, Legal AI, Semantic Retrieval, Zero-Shot Classification

Abstract

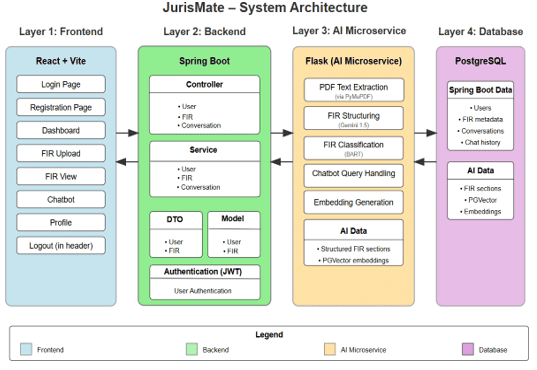

The growing number and complexity of First Information Reports (FIRs) have made manual legal analysis time-consuming and error-prone. Although FIRs are essential to criminal investigations, their unstructured format and varied language often make legal interpretation difficult. This paper introduces Jurismate, an AI-based system that helps automate FIR analysis. Jurismate uses the Gemini 1.5 language model to extract and structure key details from FIR documents, and applies a BART-based zero-shot classification method to label each FIR as lawful, unlawful, or unclear without requiring labeled training data. To support legal research, the system stores semantic embeddings in pgvector and retrieves relevant laws and past cases by embedding similarity. Experimental results indicate that Jurismate improves efficiency, makes analysis more consistent, and provides better support for legal decision-making.
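The similarity-based retrieval step can be illustrated with a minimal sketch. It is not the authors' implementation: the three-dimensional vectors, the labels in `TOY_PROVISIONS`, and the helper names `cosine_distance` and `top_k` are all hypothetical and chosen only for illustration. The sketch ranks stored items by cosine distance, which is the quantity pgvector's `<=>` operator computes; whether Jurismate uses cosine distance or another pgvector metric is an assumption here.

```python
import math
from typing import Dict, List, Tuple


def cosine_distance(a: List[float], b: List[float]) -> float:
    """Return 1 - cosine similarity (the quantity pgvector's <=> computes)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


def top_k(query: List[float],
          corpus: Dict[str, List[float]],
          k: int = 2) -> List[Tuple[str, float]]:
    """Rank corpus entries by ascending cosine distance to the query."""
    scored = [(name, cosine_distance(query, vec)) for name, vec in corpus.items()]
    scored.sort(key=lambda pair: pair[1])
    return scored[:k]


# Hypothetical toy embeddings; a real system would obtain these from an
# embedding model and store them in a pgvector column.
TOY_PROVISIONS: Dict[str, List[float]] = {
    "theft": [0.9, 0.1, 0.0],
    "assault": [0.1, 0.9, 0.1],
    "fraud": [0.2, 0.1, 0.9],
}

# Hypothetical embedding of an FIR narrative that mostly concerns theft.
fir_embedding = [0.8, 0.2, 0.1]
results = top_k(fir_embedding, TOY_PROVISIONS, k=2)

for name, distance in results:
    print(f"{name}: distance={distance:.3f}")
```

In a database-backed setup, the same ranking would typically be expressed as an `ORDER BY embedding <=> query LIMIT k` clause rather than computed in application code.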

License

Copyright (c) 2026 Loshini K, Deepasangkini K, Tharun S, K.S. Janu, D.Parameswari

This work is licensed under a Creative Commons Attribution 4.0 International License.

Research articles in the 'International Journal of Engineering and Management Research' are Open Access articles published under the Creative Commons Attribution 4.0 International License (CC BY 4.0), http://creativecommons.org/licenses/by/4.0/. This license allows you to share (copy and redistribute the material in any medium or format) and to adapt (remix, transform, and build upon the material) for any purpose, even commercially.