Quantum-Inspired Resource Allocation in Cloud-IoT Networks Using Hybrid Classical-Quantum Algorithms

DOI:

https://doi.org/10.5281/zenodo.15075999

Keywords:

Quantum, Optimization, Scalability, Adaptation, Efficiency, Allocation, Energy, Cloud, IoT, Latency

Abstract

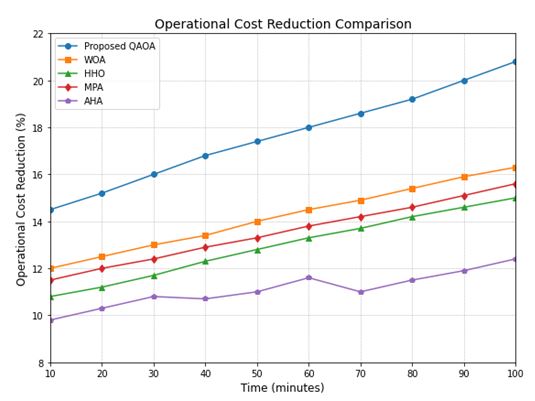

The rapid expansion of Cloud-IoT networks has made resource allocation significantly harder: computational power, storage, and bandwidth must be managed efficiently, and the growing demand for low-latency, high-efficiency allocation calls for adaptive and scalable solutions. Traditional resource management techniques, including heuristic-based algorithms and machine learning approaches, often struggle with dynamic workloads, heterogeneous IoT devices, and unpredictable traffic fluctuations. These conventional models suffer from limited adaptability, slow convergence, and suboptimal resource utilization, which lead to higher operational costs and resource wastage. To address these limitations, this research introduces a hybrid classical-quantum model that integrates the Quantum Approximate Optimization Algorithm (QAOA) to enhance real-time resource allocation. The proposed model combines classical computing for routine data processing with quantum-inspired optimization for the complex allocation problems, with the aim of adapting dynamically while minimizing latency and maximizing energy efficiency. The experimental evaluation used dynamic IoT workload scenarios and analyzed accuracy, convergence speed, adaptation latency, energy efficiency, and operational cost reduction. The results show that QAOA achieves 97.8% accuracy, outperforming WOA (87.5%), HHO (85.2%), MPA (83.1%), and AHA (82.4%). It also reduces latency from 105 ms to 85 ms, increases energy efficiency from 1.82 to 2.48, and lowers resource wastage from 6.5% to 3.8%. These findings indicate that the proposed hybrid model is effective in addressing resource allocation complexity and improves cost efficiency, scalability, and computational performance in Cloud-IoT networks.
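
The abstract does not spell out the authors' problem formulation, so the following is only an illustrative sketch of how a hybrid QAOA loop can be structured, not the method evaluated in the paper. It assumes a toy problem (splitting four tasks with hypothetical loads across two nodes so that the squared load imbalance is small), a single QAOA layer (p = 1), a classical statevector simulation standing in for quantum hardware, and a plain grid search as the classical outer optimizer. All names (`LOADS`, `cost`, `qaoa_state`, `optimize`) and the search ranges are assumptions made for this example.

```python
import cmath
import math

# Hypothetical task loads in arbitrary units; not taken from the paper.
LOADS = [4.0, 3.0, 2.0, 1.0]
N = len(LOADS)          # one qubit per task
DIM = 1 << N            # number of candidate assignments


def cost(z):
    """Squared load imbalance; bit i of z selects node 1 (set) or node 0 for task i."""
    diff = sum(w if (z >> i) & 1 else -w for i, w in enumerate(LOADS))
    return diff * diff


COSTS = [cost(z) for z in range(DIM)]


def qaoa_state(gamma, beta):
    """Simulate one QAOA layer: uniform superposition, cost phase, X mixer."""
    amp = 1.0 / math.sqrt(DIM)
    # Cost layer: the diagonal unitary exp(-i * gamma * C) acting on |+>^N.
    state = [amp * cmath.exp(-1j * gamma * c) for c in COSTS]
    # Mixer layer: exp(-i * beta * X) applied to every qubit in turn.
    c, s = math.cos(beta), -1j * math.sin(beta)
    for q in range(N):
        bit = 1 << q
        for z in range(DIM):
            if not z & bit:
                a, b = state[z], state[z | bit]
                state[z] = c * a + s * b
                state[z | bit] = s * a + c * b
    return state


def expected_cost(gamma, beta):
    """Expectation value of the cost over the measurement distribution."""
    return sum(abs(a) ** 2 * c for a, c in zip(qaoa_state(gamma, beta), COSTS))


def optimize(gamma_steps=200, beta_steps=20):
    """Classical outer loop: grid search over the two circuit angles."""
    best = (float("inf"), 0.0, 0.0)
    for i in range(gamma_steps):
        gamma = (math.pi / 2) * i / gamma_steps
        for j in range(beta_steps):
            beta = (math.pi / 2) * j / beta_steps
            energy = expected_cost(gamma, beta)
            if energy < best[0]:
                best = (energy, gamma, beta)
    return best


def most_likely_assignment(gamma, beta):
    """Return the node index (0 or 1) per task for the most probable bitstring."""
    probs = [abs(a) ** 2 for a in qaoa_state(gamma, beta)]
    z = max(range(DIM), key=probs.__getitem__)
    return [(z >> i) & 1 for i in range(N)]


if __name__ == "__main__":
    energy, gamma, beta = optimize()
    print("expected cost:", energy)
    print("assignment:", most_likely_assignment(gamma, beta))
```

The division of labour mirrors the hybrid idea described above: `qaoa_state` plays the role of the quantum (here simulated) subroutine, while `optimize` is the classical routine that tunes the angles. Because the grid includes `gamma = 0`, where the state is the uniform superposition, the returned expected cost can never be worse than random assignment. A realistic Cloud-IoT allocation would need more nodes, capacity constraints, and a better optimizer than a grid search.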

License

Copyright (c) 2025 P.Nirmala Priyadharshini, S.Mano Ranjitham, A.Jemima

This work is licensed under a Creative Commons Attribution 4.0 International License.

Research articles in the 'International Journal of Engineering and Management Research' are Open Access articles published under the Creative Commons Attribution 4.0 International License (CC BY 4.0), http://creativecommons.org/licenses/by/4.0/. This license allows you to share (copy and redistribute the material in any medium or format) and to adapt (remix, transform, and build upon the material) for any purpose, even commercially.