FraudShield – Deepfake Detection Tools

DOI:

https://doi.org/10.5281/zenodo.15314707

Keywords:

Deepfake Detection, Convolutional Neural Networks (CNN), Image Forgery, Machine Learning, Fraud Detection, Digital Security, Adversarial Deepfake, Multimedia Forensics

Abstract

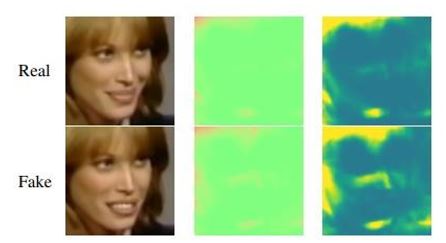

FraudShield is a web application designed to detect deepfakes and mitigate their impact, helping to preserve content authenticity and integrity. As manipulated images and deepfake videos become more common, detecting fraudulent content has become increasingly critical. This project introduces a hybrid detection system built on Convolutional Neural Networks (CNNs) to identify morphed images and manipulated content. The CNN component analyzes visual features such as texture inconsistencies and pixel anomalies to detect tampered facial features, artifacts, and other signs of image morphing. FraudShield employs a multi-stage CNN pipeline that extracts spatial and temporal features from images and video frames, improving its ability to identify synthetic forgeries. The system is trained on large-scale datasets to improve robustness against adversarial deepfakes, with the goal of raising detection accuracy while reducing both false positives and false negatives. The hybrid model is intended to strengthen online security as a comprehensive fraud detection solution, and its scalable architecture allows adaptation to emerging fraud patterns and new types of image manipulation. Overall, the system aims to provide a reliable and efficient tool for identifying image tampering and reinforcing digital security.
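To make the video-level decision and the false positive / false negative trade-off more concrete, the following is a minimal sketch and not the authors' implementation. It assumes a CNN has already produced a fake probability for each frame. The function names (`video_score`, `classify`, `error_rates`), the mean-based aggregation, the 0.5 threshold, and the sample scores are all hypothetical choices made for illustration.

```python
from statistics import mean


def video_score(frame_scores):
    """Average per-frame fake probabilities into one video-level score.

    The per-frame scores stand in for CNN outputs (an assumption).
    """
    if not frame_scores:
        raise ValueError("need at least one frame score")
    return mean(frame_scores)


def classify(frame_scores, threshold=0.5):
    """Flag the video as fake when the averaged score reaches the threshold."""
    return video_score(frame_scores) >= threshold


def error_rates(predictions, labels):
    """Return (false positive rate, false negative rate); True means fake."""
    fp = sum(1 for p, y in zip(predictions, labels) if p and not y)
    fn = sum(1 for p, y in zip(predictions, labels) if not p and y)
    negatives = sum(1 for y in labels if not y)
    positives = sum(1 for y in labels if y)
    fpr = fp / negatives if negatives else 0.0
    fnr = fn / positives if positives else 0.0
    return fpr, fnr


# Made-up example data: (per-frame scores, ground-truth "is fake" label).
videos = [
    ([0.9, 0.8, 0.7], True),
    ([0.2, 0.1, 0.3], False),
    ([0.6, 0.4, 0.3], True),   # averaged score falls below the threshold
    ([0.7, 0.6, 0.5], False),  # averaged score reaches the threshold
]

predictions = [classify(scores) for scores, _ in videos]
labels = [label for _, label in videos]
fpr, fnr = error_rates(predictions, labels)
print(f"false positive rate: {fpr:.2f}, false negative rate: {fnr:.2f}")
```

Raising the threshold in this sketch would typically lower the false positive rate at the cost of more missed fakes, which is the trade-off any such detector has to tune.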

License

Copyright (c) 2025 Pankhuri Tripathi, Shikha Singh, Anubhav Nishad, Farheen Siddiqui

This work is licensed under a Creative Commons Attribution 4.0 International License.

Research articles in the International Journal of Engineering and Management Research are Open Access articles published under the Creative Commons Attribution 4.0 International License (CC BY 4.0), http://creativecommons.org/licenses/by/4.0/. This license allows you to share (copy and redistribute the material in any medium or format) and adapt (remix, transform, and build upon the material) for any purpose, even commercially.