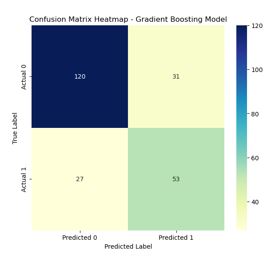

Comparative Analysis of Machine Learning Models for Diabetes Prediction

DOI:

https://doi.org/10.5281/zenodo.15867611

Keywords:

Diabetes Prediction, Machine Learning, Logistic Regression, Random Forest, Gradient Boosting, Linear Regression, Predictive Analytics

Abstract

Diabetes is a chronic health condition affecting millions worldwide, and early detection plays a vital role in effective disease management and prevention. In this study, we conduct a comparative analysis of four machine learning models (Logistic Regression, Random Forest, Gradient Boosting, and Linear Regression) applied to the Pima Indians Diabetes dataset obtained from Kaggle. The dataset comprises diagnostic measurements of female patients of Pima Indian heritage aged 21 and above. Each model is evaluated using four classification metrics: accuracy, precision, recall, and F1-score. Logistic Regression and Gradient Boosting achieved the highest accuracy, 75%, while Random Forest and Linear Regression scored slightly lower at 72% and 73.16%, respectively. The study compares ensemble methods with traditional models for predicting diabetes outcomes and provides insight into their relative strengths for clinical decision support systems. These results suggest that machine learning can be a valuable tool in aiding early diagnosis and improving patient care strategies.
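As a rough illustration of the four metrics named in the abstract, the following minimal sketch computes accuracy, precision, recall, and F1-score for binary labels in plain Python. It is not the authors' code. The helper names `threshold_predictions` and `classification_metrics` are hypothetical, the toy labels and scores are invented, and the 0.5 cutoff used to turn continuous outputs (such as those of Linear Regression) into class labels is an assumption, since the abstract does not state how that model was scored.

```python
from __future__ import annotations

from typing import Sequence


def threshold_predictions(scores: Sequence[float], cutoff: float = 0.5) -> list[int]:
    """Map continuous scores to 0/1 labels.

    Assumption: a 0.5 cutoff; the abstract does not specify one.
    """
    return [1 if s >= cutoff else 0 for s in scores]


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> dict[str, float]:
    """Compute accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if len(y_true) == 0:
        raise ValueError("at least one sample is required")

    # Count the four cells of the confusion matrix.
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    accuracy = (tp + tn) / len(pairs)
    # Fall back to 0.0 when a denominator is zero (no predicted or no actual positives).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Toy data invented for illustration only; not taken from the Pima dataset.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
scores = [0.9, 0.2, 0.4, 0.7, 0.6, 0.1, 0.8, 0.3]

y_pred = threshold_predictions(scores)
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Reporting precision and recall alongside accuracy matters for a screening task like this one, because two models with the same accuracy can differ in how many true cases they miss.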

License

Copyright (c) 2025 Pratiksha Patil, Deepali Lawand, Mohit Gambas, Deepak Gaikwad

This work is licensed under a Creative Commons Attribution 4.0 International License.

Research articles in the International Journal of Engineering and Management Research are open access and published under the Creative Commons Attribution 4.0 International License (CC BY 4.0), http://creativecommons.org/licenses/by/4.0/. This license allows you to share (copy and redistribute the material in any medium or format) and adapt (remix, transform, and build upon the material) for any purpose, even commercially.